Upstash Kafka Setup

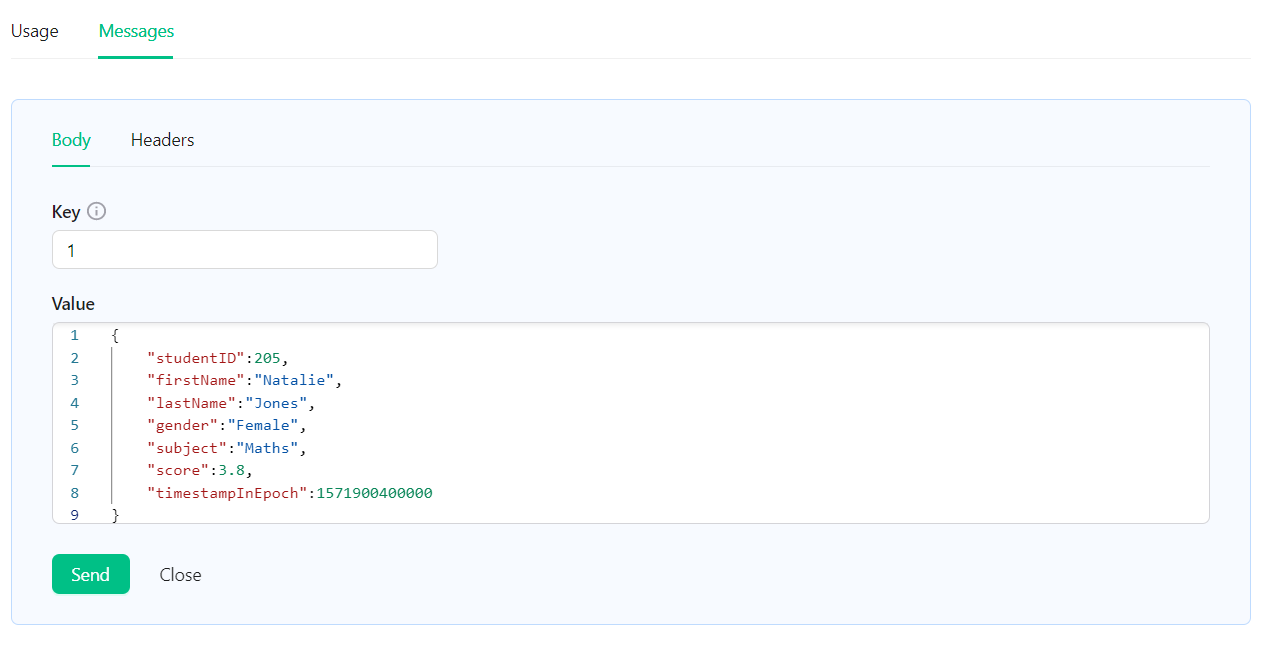

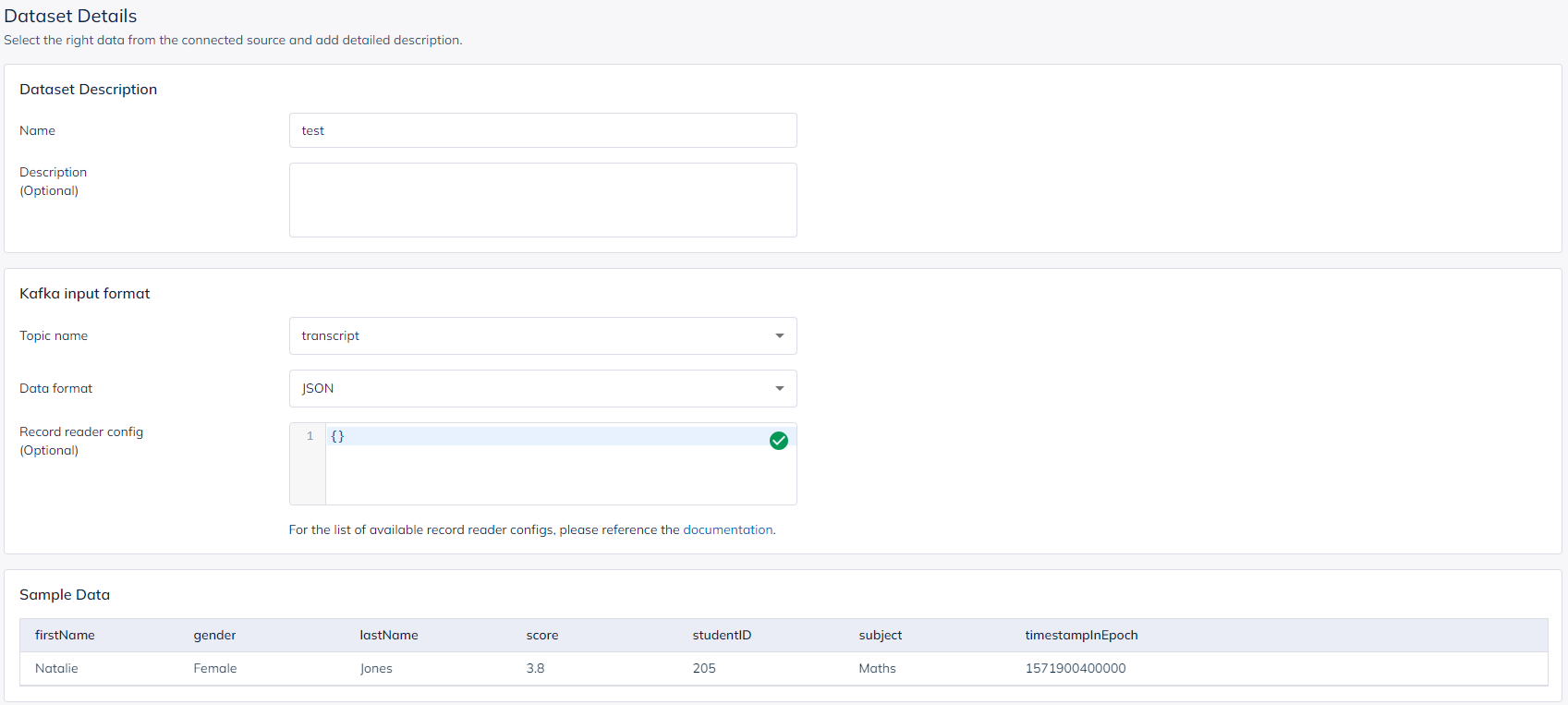

Create a Kafka cluster using the Upstash Console or Upstash CLI by following the Getting Started guide. Then create one topic by following the topic creation steps. This topic will be the source for the Apache Pinot table running on StarTree. This tutorial names it “transcript”.

StarTree Setup

To use StarTree Cloud, you first need to create an account. Initializing the cloud environment on StarTree takes two steps: first create an organization, then create a workspace under that organization. You can also follow the StarTree quickstart for these setup steps.

Connect StarTree Cloud to Upstash Kafka

Once you have created your workspace, open Data Manager under the Services section of your workspace. Data Manager is where we will connect Upstash Kafka and work on the Pinot table.

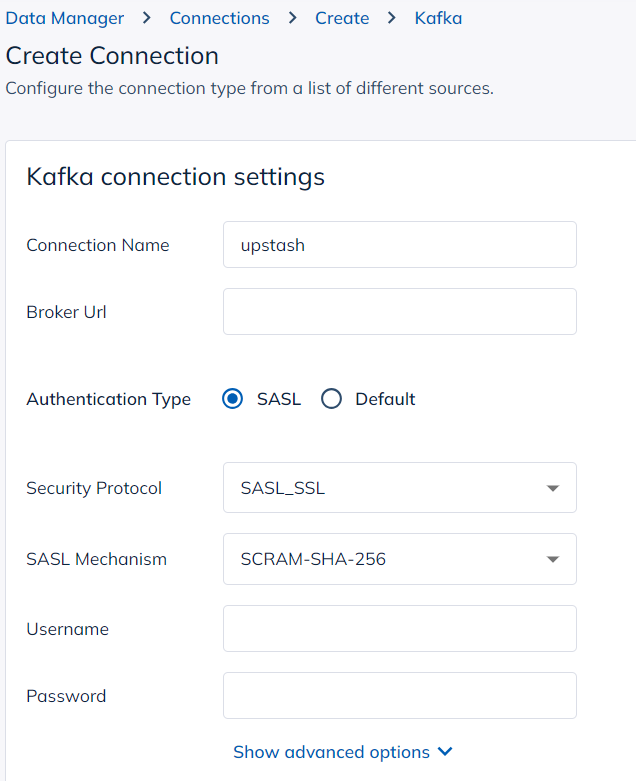

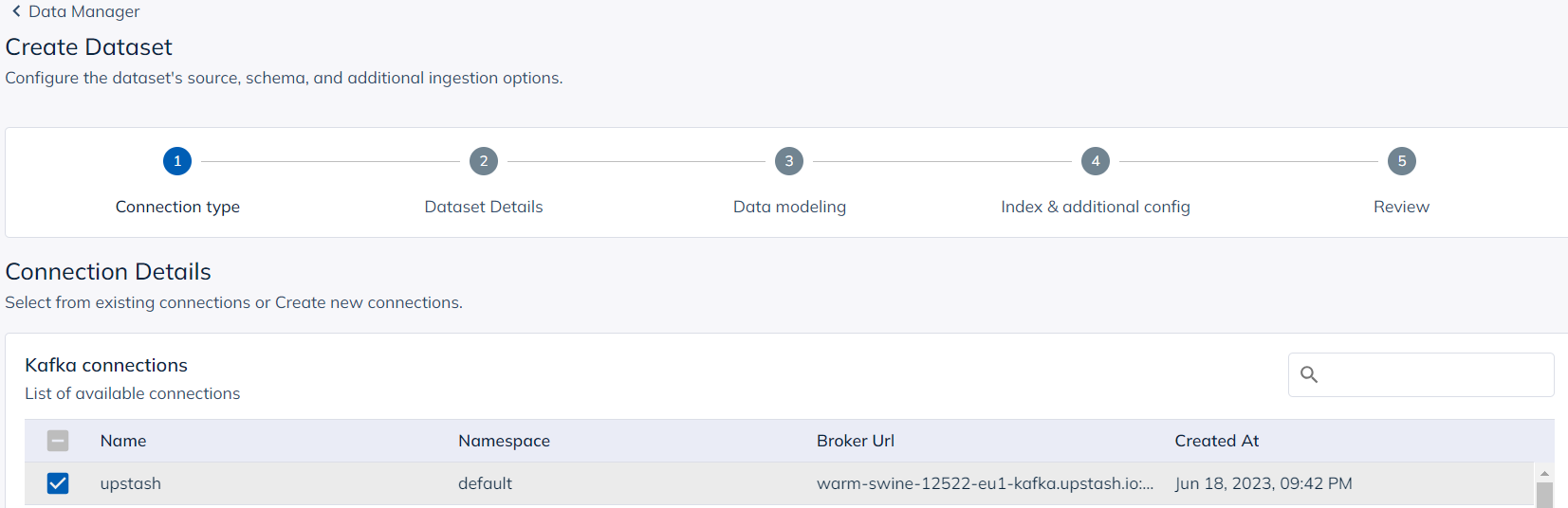

To connect Upstash Kafka with StarTree, create a new connection in Data Manager.

- Connection Name: Any name you choose.

- Broker Url: The endpoint of your Upstash Kafka cluster. You can find it in the details section of your Upstash Kafka cluster.

- Authentication Type: SASL

- Security Protocol: SASL_SSL

- SASL Mechanism: SCRAM-SHA-256

- Username: This should be the username given in the details section in your Upstash Kafka cluster.

- Password: This should be the password given in the details section in your Upstash Kafka cluster.

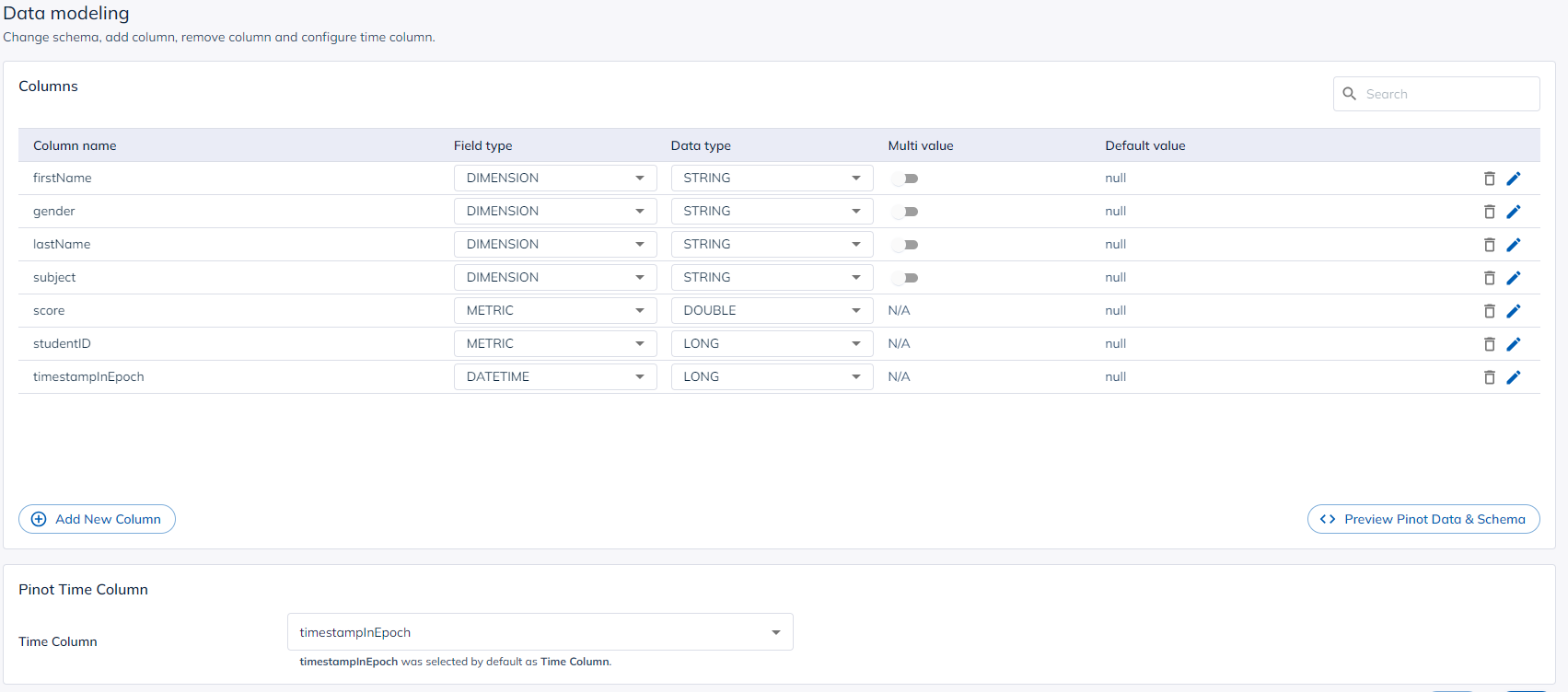
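The same connection settings can also be used by a Kafka client outside StarTree, for example to push a few test records into the topic before building the table. The following is a minimal sketch, not part of the original tutorial. It assumes the third-party `confluent-kafka` package for the optional send step. The helper names `build_kafka_config` and `send_test_record` are hypothetical, and the endpoint, username and password are placeholders you replace with the values from your cluster details.

```python
import json


def build_kafka_config(endpoint: str, username: str, password: str) -> dict:
    """Map the Data Manager connection fields to librdkafka-style client settings."""
    return {
        "bootstrap.servers": endpoint,        # Broker Url
        "security.protocol": "SASL_SSL",      # Security Protocol
        "sasl.mechanism": "SCRAM-SHA-256",    # SASL Mechanism
        "sasl.username": username,            # Username
        "sasl.password": password,            # Password
    }


def send_test_record(config: dict, topic: str, record: dict) -> None:
    """Send one JSON-encoded record to the topic (assumes confluent-kafka is installed)."""
    # Imported lazily so that building the config works without the package.
    from confluent_kafka import Producer

    producer = Producer(config)
    producer.produce(topic, value=json.dumps(record).encode("utf-8"))
    producer.flush()  # block until the record is delivered or fails


if __name__ == "__main__":
    # Placeholder values: replace with the details of your own cluster.
    config = build_kafka_config("<UPSTASH_KAFKA_ENDPOINT>", "<USERNAME>", "<PASSWORD>")
    # Print everything except the password to check the settings.
    print({key: value for key, value in config.items() if key != "sasl.password"})
```

To send a record, call `send_test_record(config, "transcript", {...})` with a payload whose fields match the schema you define for the Pinot table. The payload shape is up to you and is not prescribed by this tutorial.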

Query Data

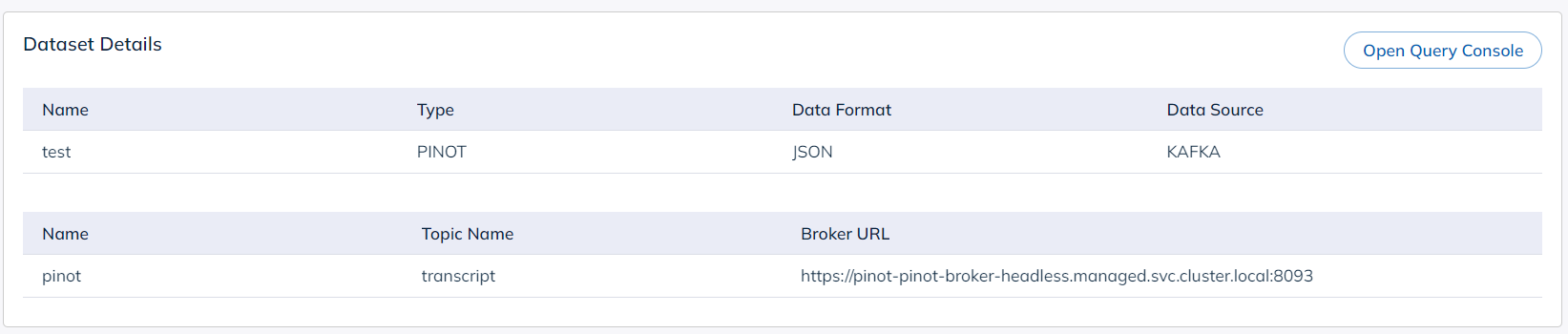

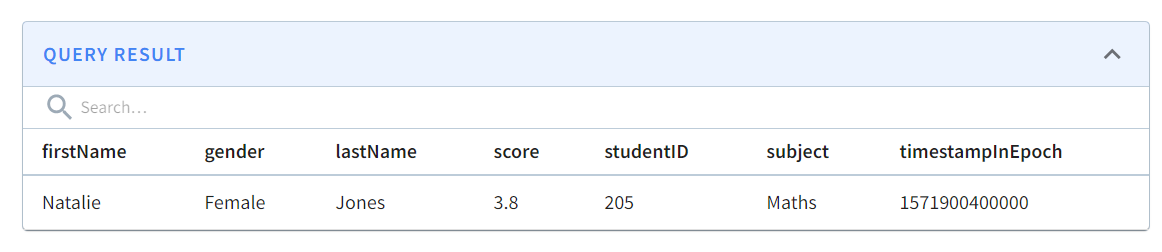

Open the dataset you created in StarTree Data Manager and navigate to the query console.